We’ll assume here that Logstash is listening to UDP traffic on port 2233.
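To check that something is actually listening on that port, it helps to send a hand-built syslog line over UDP. The sketch below is one way to do that, assuming the classic BSD syslog layout (RFC 3164) and a Logstash input bound to `localhost:2233`. The function names, the `web-1` style hostname, and the `app` tag are illustrative and not part of the original setup.

```python
import socket
from datetime import datetime


def format_syslog_message(message, *, facility=1, severity=6,
                          hostname="localhost", tag="app", when=None):
    """Build a BSD-style syslog line: <PRI>TIMESTAMP HOSTNAME TAG: MESSAGE."""
    when = when or datetime.now()
    # The priority value combines facility and severity: PRI = facility * 8 + severity.
    pri = facility * 8 + severity
    # RFC 3164 timestamps pad the day of the month with a space, not a zero.
    timestamp = f"{when:%b} {when.day:2d} {when:%H:%M:%S}"
    return f"<{pri}>{timestamp} {hostname} {tag}: {message}"


def send_syslog_udp(line, host="localhost", port=2233):
    """Send one syslog line as a single UDP datagram (fire and forget)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(line.encode("utf-8"), (host, port))
```

Calling `send_syslog_udp(format_syslog_message("hello from a test client"))` should make one event show up on the Logstash side if the input is configured for syslog over UDP. Because UDP is connectionless, the call succeeds even when nothing is listening, so the absence of an error proves nothing.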

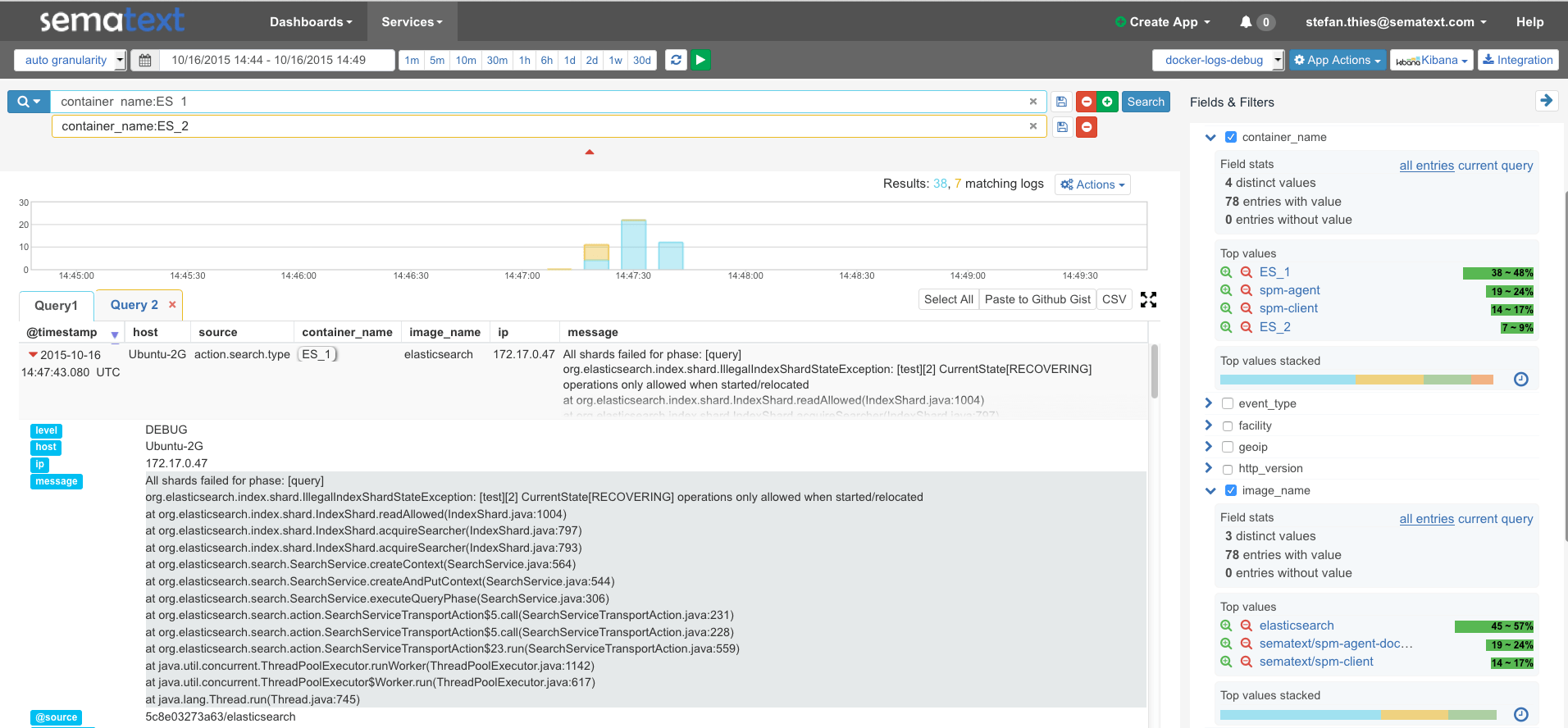

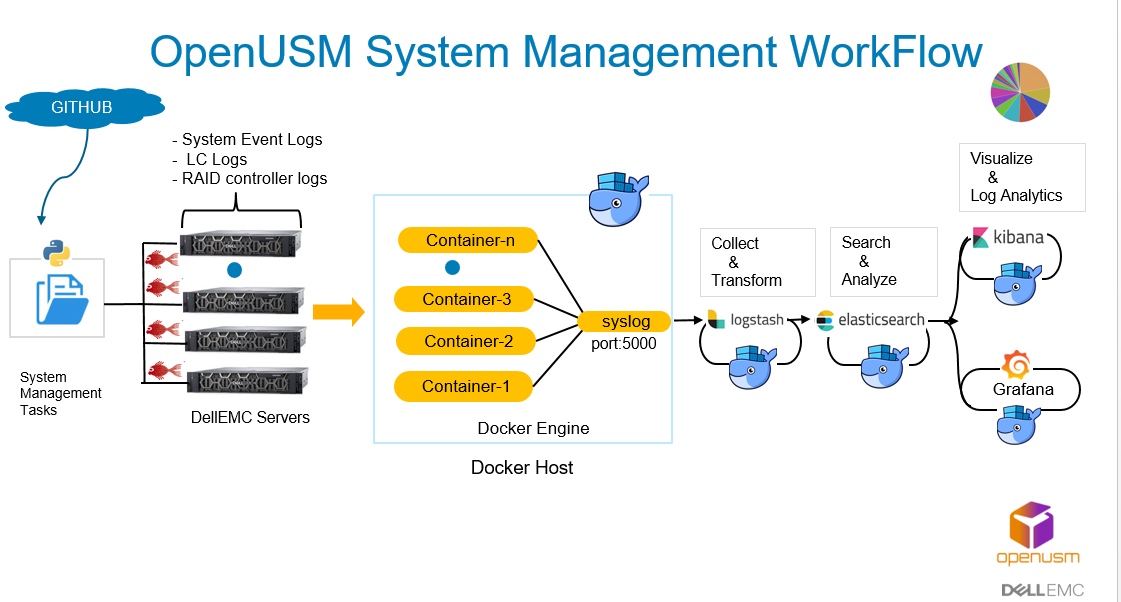

Our clients are applications sitting inside Docker containers, which themselves are parts of AWS ECS services. In order to connect them to ELK, we started by setting up the ELK stack locally, and then redirecting the logs from a Docker container located on the same machine. This setup was useful for both development and debugging. All we needed to do on the client side was start the Docker container as follows: `docker run --log-driver=syslog --log-opt syslog-address=udp://localhost:2233`

Syslog allows for a client-server architecture for log collection, with a central log server receiving logs from various client machines. This is the structure that we’re looking for.

Because Logstash is a container monitored by Logspout, Logspout would forward all of Logstash’s logs to Logstash, causing it to spin into a frenetic loop and eat up almost all of the CPU on the box. `docker stats`, a very useful command which reports container resource usage statistics in real time, was partially how I caught and understood that this was happening.
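When several services need the same logging flags, the `docker run` invocation with the syslog log driver can be assembled in one place. This is a minimal sketch under stated assumptions: the helper name and the image name `my-service:latest` are hypothetical, and only the `--log-driver` and `--log-opt syslog-address` flags come from the command shown in the text.

```python
import shlex


def docker_run_syslog_args(image, *, host="localhost", port=2233, protocol="udp"):
    """Return the argv for `docker run` with the syslog logging driver enabled."""
    address = f"{protocol}://{host}:{port}"
    return [
        "docker", "run",
        "--log-driver=syslog",
        "--log-opt", f"syslog-address={address}",
        image,  # hypothetical image name supplied by the caller
    ]


# Render the argument list as a copy-pastable shell command.
print(shlex.join(docker_run_syslog_args("my-service:latest")))
```

Keeping the arguments as a list rather than a single string means the same value can be passed straight to `subprocess.run` without any shell quoting concerns.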

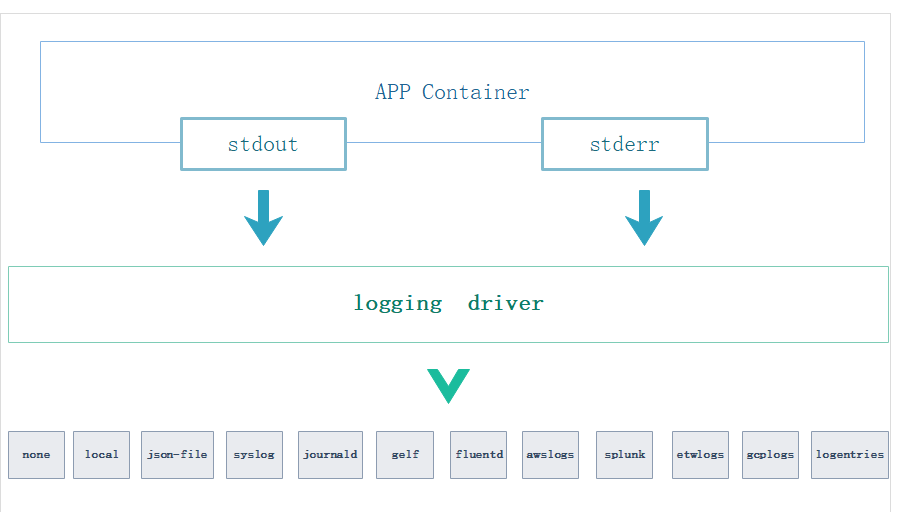

Client-side Configuration

Before developing anything, the first decision we needed to make was to pick a logging format. We decided on syslog, which is a widely accepted logging standard.


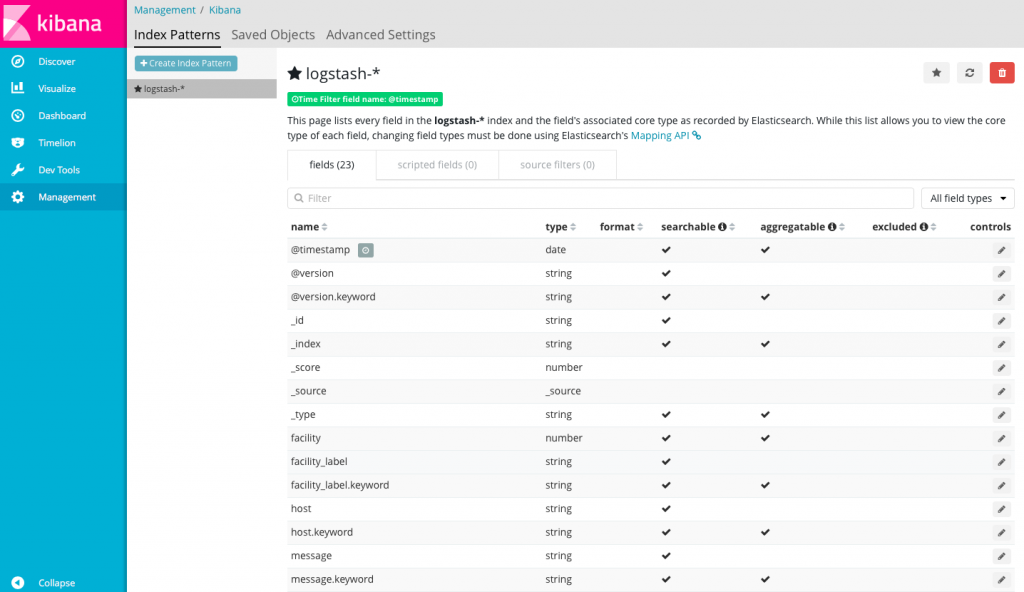

Because of these issues, storing your log data in a structured format becomes very helpful. Also, being able to track the number of occurrences and monitor their rate of change can be quite indicative of the underlying causes. This is why we decided to include the ELK stack in our architecture.

I’ll introduce you to one possible solution for sending logs from various separate applications to a central ELK stack and storing the data in a structured way. A big chunk of Semaphore architecture is made up of microservices living inside Docker containers and hosted by Amazon Web Services (AWS), so ELK joins this system as the point to which all of these separate services send their logs, which it then processes and visualizes. In this article, I’ll cover how to configure the client side (the microservices) and the server side (the ELK stack).

`version: 3 services: elasticsearch: image: elasticsearch:7.9.3 environment: - discovery.`


In this tutorial you’ll see how to easily set up an ELK (Elasticsearch, Logstash, Kibana) stack to have a centralized logging solution for your Docker swarm cluster.

Install the stack

The stack file gives you a working ELK stack on your Docker swarm. To run Elasticsearch on its own as a single node: `docker run -d --net=host --name=elasticsearch -p 9200:9200 -p 9300:9300 -e discovery.type=single-node /elasticsearch/elasticsearch:7.11.2`

If there are any errors or issues, or you want to check the console output from a container, use the `docker logs` command. For example, `docker logs logstash1` displays the logs for the logstash1 container.
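For repeated checks, the `docker logs` call can be wrapped in a small script. The sketch below assumes the Docker CLI is on the `PATH` and that a container named `logstash1` exists. The helper names are hypothetical, and `--tail` is the standard `docker logs` option for limiting output to the last N lines.

```python
import subprocess


def docker_logs_args(container, *, tail=None):
    """Return the argv for `docker logs`, optionally limited to the last `tail` lines."""
    args = ["docker", "logs"]
    if tail is not None:
        args += ["--tail", str(tail)]
    args.append(container)
    return args


def fetch_container_logs(container, *, tail=None):
    """Run `docker logs` and return everything it printed as one string."""
    result = subprocess.run(
        docker_logs_args(container, tail=tail),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if the container does not exist
    )
    # Container output can arrive on either stream, so return both.
    return result.stdout + result.stderr
```

A call such as `fetch_container_logs("logstash1", tail=50)` would then return the most recent lines for inspection or for searching for error messages.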

Having comprehensive logs is a massive life-saver when debugging or investigating issues. Still, logging too much data can have the adverse effect of hiding the actual information you are looking for.

One of the most frequently used Docker commands is `docker logs`, which retrieves the log output from a container. The logs themselves can go either to syslog or Journald on the host machine, or to a remote destination such as Fluentd or Logstash.

Compared to Filebeat, Logstash can be installed on any single machine (or a cluster of them), as long as it’s reachable from the Docker containers. Even better, there’s no need for a custom configuration that depends on the container’s log location.

`Sending Logstash's logs to /var/log/logstash which is now configured via log4j2.properties`

Let’s install Logspout on one Docker host to collect logs from an Nginx container.